A Journey Through the Production Lifecycle of Meta’s eSports Competitions and Fitness Challenges

The idea emerged from a brainstorming session with the goal of engaging Meta employees in a unique and interactive way. The concept centered on live-streamed eSports competitions and fitness challenges featuring popular VR games such as Beat Saber, Population: ONE, and Supernatural Fitness. This initiative was designed to foster camaraderie and highlight the diverse talents within Meta’s workforce.

With a clear vision in mind, the planning phase began by outlining the necessary technical infrastructure and tools: AWS cloud infrastructure, virtual machines accessed via Teradici PCoIP, vision mixing software such as vMix 4K and Ross Graphite CPC, and NDI tools such as Screen Capture HX and Studio Monitor.

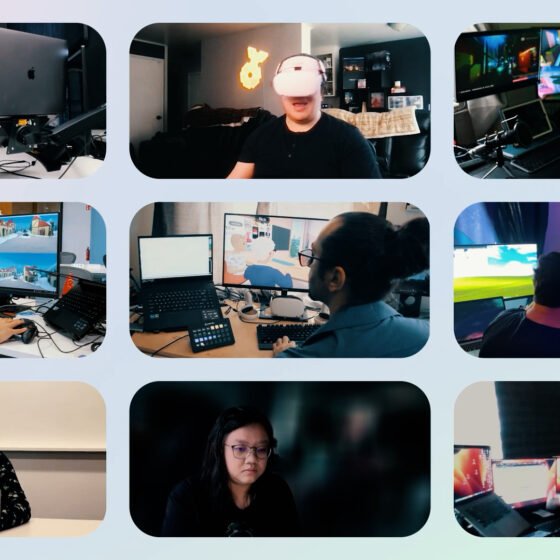

A cross-functional team was assembled under my leadership, drawing members from various departments, including the Meta Dogfooding organization, producers, directors, camera operators, XR technicians, and support staff. Each team member received a thorough briefing on their specific role and the overarching vision for the production. The excitement was palpable as the virtual control room was set up with the required equipment. Virtual cameras were deployed within the gaming applications to capture the in-VR talent.

The AWS cloud infrastructure was configured to provide access to EC2 G5 instances. One instance was dedicated to running vMix 4K and was operated remotely by our Technical Director (TD). From that instance, the TD streamed the Program feed to Streamshark, the streaming platform where the audience watched. The camera feeds the TD cut between came from four camera operators, each running their own instance and spawning a virtual camera within the game itself. Each operator shared their display over our private network using NDI tools such as Screen Capture HX.
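The allocation described above can be written down as a small data model. This is only an illustrative sketch: the instance labels (`td-vmix`, `cam-1`, and so on) and the `Instance` type are hypothetical names chosen for the example, not identifiers from the actual production, and no AWS API is called.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Instance:
    name: str        # hypothetical label, for illustration only
    role: str        # what the machine was used for
    sends_ndi: bool  # True if the machine shares its display over NDI


def build_allocation(camera_operators: int = 4) -> List[Instance]:
    """One TD machine running vMix, plus one machine per camera operator."""
    plan = [Instance("td-vmix", "technical director (vMix 4K, Program feed)", False)]
    for i in range(1, camera_operators + 1):
        plan.append(Instance(f"cam-{i}", "camera operator (in-game virtual camera)", True))
    return plan


def ndi_sources(plan: List[Instance]) -> List[str]:
    """Names of the machines whose displays the TD can cut between."""
    return [inst.name for inst in plan if inst.sends_ndi]


if __name__ == "__main__":
    for inst in build_allocation():
        print(f"{inst.name}: {inst.role}")
```

Writing the plan out this way makes it easy to see that the TD's machine consumes four NDI sources and produces a single Program feed.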

Two machines were dedicated to running Zoom calls, providing a cross-talk workflow that let the in-VR talent and the production team communicate with each other seamlessly. Additionally, a team member wearing a Quest Pro 2 was assigned as the in-VR stage manager, responsible for welcoming talent as they teleported into the game and helping them prepare.

Viz Flowics was used for HTML5 graphics, letting us create, customize, and animate overlays during the live production from any browser, without needing to be connected to our AWS network. A streaming engineer monitored the stream in real time using VLink, a cloud-based communication tool, and flagged any bitrate issues or audio glitches for the TD to address on the fly.
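As a rough illustration of what flagging a bitrate issue can mean in practice, the sketch below marks any sample that falls below a fraction of the target bitrate. The target, the tolerance, and the sample values are all assumptions made up for the example. They are not measurements from the production, and this is not how VLink or any tool named here works.

```python
from typing import List, Tuple


def flag_bitrate_drops(
    samples_kbps: List[float],
    target_kbps: float,
    tolerance: float = 0.8,  # assumed threshold: flag anything under 80% of target
) -> List[Tuple[int, float]]:
    """Return (sample index, bitrate) pairs that fall below the allowed floor."""
    floor = target_kbps * tolerance
    return [(i, s) for i, s in enumerate(samples_kbps) if s < floor]


if __name__ == "__main__":
    # Hypothetical one-per-second readings, in kbps.
    readings = [6000, 5900, 4100, 6050, 3000]
    for index, kbps in flag_bitrate_drops(readings, target_kbps=6000):
        print(f"sample {index}: {kbps} kbps is below the floor")
```

A check like this only surfaces the problem. Deciding how to respond, for example by switching sources or lowering the encode settings, remained a judgment call for the TD.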

My extensive experience with the production lifecycle was key to running a successful livestream with so many technical moving parts to manage. This comprehensive setup ensured a smooth and interactive experience for both participants and viewers, showcasing the diverse talents within Meta and fostering a sense of community.